Using Generative AI to Automate Pediatric Audiology Diagnoses

Overview

The California Polytechnic State University, San Luis Obispo (Cal Poly) Digital Transformation Hub (DxHub), powered by Amazon Web Services (AWS), has collaborated with the Public Health Informatics Institute in a project involving Mass Eye and Ear, Boston Children’s Hospital and Massachusetts Universal Newborn Hearing Screening (UNHS) Program to build an innovative solution that leverages generative artificial intelligence (AI) to automate the classification of pediatric audiology reports.

Audiologists across the U.S. are required to report infants and children who are Deaf or Hard of Hearing (DHH) to state health departments so those children can be referred for treatment as early as possible. Unfortunately, this reporting process is often burdensome: audiologists must perform double data entry into a state system and condense the results of multiple tests into a single set of fields describing the patient’s hearing status. This project demonstrates how generative AI, paired with reasoning logic and human-in-the-loop validation, can streamline these workflows while maintaining the required scientific rigor.

Problem

Early Hearing Detection and Intervention (EHDI) programs aim to screen all infants for hearing loss by 1 month of age, diagnose hearing loss by 3 months, and refer infants who are deaf or hard of hearing to early intervention services by 6 months. Timely and accurate reporting of pediatric hearing loss is essential for connecting children to intervention and supporting positive developmental outcomes. However, audiologists are often asked to complete duplicative data entry and conduct manual processes to report this important information to public health agencies. These burdens can delay treatment and, more critically, contribute to infants and young children being lost to follow-up and not receiving the services they need.

Addressing this requires more than data extraction; it demands intelligent, automated diagnostic reasoning. Systems must recognize patterns, apply precise rules consistently, and produce explainable, trustworthy outcomes that clinicians can validate. Manual review introduces delays that keep infants and children from receiving the services they need. This project aimed to bridge that gap by developing an automated, transparent, and precise system that captures the required public health reporting information from the narrative reports audiologists already produce as part of their normal workflow. The result is a lighter reporting burden on audiologists and more timely treatment for infants and children who are DHH.

Innovation In Action

To address the challenges of interpreting complex pediatric hearing data and ensuring diagnostic consistency across institutions, the DxHub developed an automated, AI-powered classification system that leverages Amazon Bedrock. This solution was designed to support audiologists and researchers by transforming free-text reports and audiometric test results into structured, rule-based classifications with traceable clinical reasoning.

The tool takes audiology data from various institutions and runs it through a configurable logic engine that reflects each organization’s specific clinical rules and reporting requirements. This logic is embedded in a centralized configuration file that defines templates, risk factors for deafness or hearing loss, and acceptable values unique to each clinic or study. The system then generates structured prompts and sends them to Anthropic’s Claude Sonnet models on Amazon Bedrock, using either batch inference or on-demand API calls depending on the data volume.
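To make the configuration-driven design more concrete, the sketch below shows what a per-institution configuration and a simple volume-based routing rule might look like. It is a minimal sketch under stated assumptions: the JSON key names, the institution name `clinic_a`, the example allowed values and rules, and the `batch_threshold` cutoff are all hypothetical, and the project’s actual config.json schema and routing criteria may differ.

```python
import json

# Hypothetical config.json contents. The key names, institution name, allowed
# values, rules, and batch threshold are illustrative assumptions only.
CONFIG_JSON = """
{
  "batch_threshold": 100,
  "institutions": {
    "clinic_a": {
      "template": {
        "left_ear_type": "", "left_ear_severity": "",
        "right_ear_type": "", "right_ear_severity": "",
        "risk_factors": [], "explanation": ""
      },
      "allowed_values": {
        "left_ear_type": ["Normal", "Sensorineural", "Conductive", "Mixed", "Auditory Neuropathy"],
        "right_ear_type": ["Normal", "Sensorineural", "Conductive", "Mixed", "Auditory Neuropathy"],
        "left_ear_severity": ["Normal", "Mild", "Moderate", "Moderately Severe", "Severe", "Profound"],
        "right_ear_severity": ["Normal", "Mild", "Moderate", "Moderately Severe", "Severe", "Profound"]
      },
      "risk_factors": ["NICU stay longer than 5 days", "Family history of permanent childhood hearing loss"],
      "rules": [
        "Rule 1: Reason only with the data explicitly provided.",
        "Rule 2: Cite the rule number that supports each classification."
      ]
    }
  }
}
"""


def load_institution_config(config: dict, institution: str) -> dict:
    """Return the template, allowed values, risk factors, and rules for one clinic."""
    try:
        return config["institutions"][institution]
    except KeyError:
        raise ValueError(f"No configuration found for {institution!r}") from None


def choose_invocation_mode(num_reports: int, config: dict) -> str:
    """Hypothetical rule: large uploads go to batch inference, small ones to on-demand calls."""
    return "batch" if num_reports >= config["batch_threshold"] else "on_demand"


config = json.loads(CONFIG_JSON)
clinic = load_institution_config(config, "clinic_a")
print(choose_invocation_mode(250, config))  # batch
print(choose_invocation_mode(12, config))   # on_demand
```

Keeping clinic-specific rules in data rather than in code means that supporting a new clinic is, in this sketch, a configuration change rather than a code change.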

The innovation stands out for its ability to scale while maintaining diagnostic accuracy. The system applies detailed classification rules, such as how to prioritize sensorineural versus conductive hearing loss or how to flag auditory neuropathy, and ensures that each diagnosis includes both left and right ear assessments, severity levels, risk factor tagging, and an explanation grounded in clinical guidelines.

One of the most powerful features is its ability to provide transparent reasoning for each output. Audiologists reviewing results can trace how decisions were made, with cited rule numbers and logical steps clearly documented. This makes the tool especially valuable not just for automation, but for training, auditability, and quality assurance.
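A downstream check along the following lines could help reviewers confirm that every output includes both ear assessments and cites its supporting rules. This is a hypothetical sketch: the field names and the "Rule N" citation format are assumptions, not the project’s documented output schema.

```python
import re

# Hypothetical output fields; the project's actual schema may differ.
REQUIRED_FIELDS = (
    "left_ear_type", "left_ear_severity",
    "right_ear_type", "right_ear_severity",
    "risk_factors", "explanation",
)
# Assumes explanations cite rules as "Rule N" (case-insensitive).
RULE_PATTERN = re.compile(r"\brule\s+(\d+)\b", re.IGNORECASE)


def missing_fields(result: dict) -> list:
    """List required fields that are absent or blank. An empty risk_factors
    list is allowed, since a patient may have no risk factors."""
    return [f for f in REQUIRED_FIELDS if result.get(f) in (None, "")]


def cited_rules(explanation: str) -> list:
    """Return the rule numbers cited in an explanation, in first-seen order."""
    seen = []
    for match in RULE_PATTERN.finditer(explanation):
        number = int(match.group(1))
        if number not in seen:
            seen.append(number)
    return seen


result = {
    "left_ear_type": "Sensorineural", "left_ear_severity": "Moderate",
    "right_ear_type": "Normal", "right_ear_severity": "Normal",
    "risk_factors": [],
    # Made-up explanation text, for illustration only.
    "explanation": "Left ear meets the criteria in Rule 3; right ear is "
                   "within normal limits per Rule 1. See also rule 3.",
}
print(missing_fields(result))              # []
print(cited_rules(result["explanation"]))  # [3, 1]
```

Outputs with missing fields or no cited rules are natural candidates for the human-in-the-loop review step.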

Technical Solution

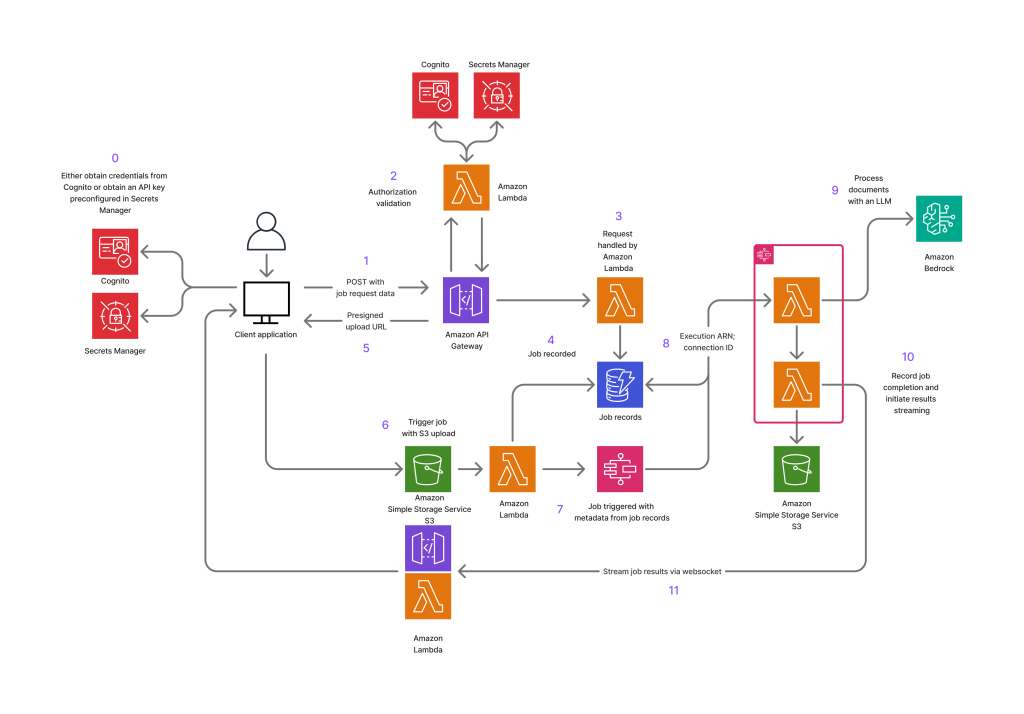

The technical architecture of the audiology classification prototype was designed with scalability, automation, and clinical rigor in mind. At its core, the system is built around a fully serverless AWS pipeline that leverages the power of Amazon Bedrock batch inference and a Python-driven logic engine abstracted via a config.json file.

The workflow begins when a user uploads a raw JSON file containing multiple de-identified hearing reports and audiometric test results to a designated S3 input bucket. A Python script automatically reads the file, parses each patient entry, and dynamically generates few-shot classification prompts. Each prompt includes the full hearing report, the structured audiometric results, and institution-specific templates and rule sets.
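Amazon Bedrock batch inference reads its input as a JSONL file in which each line holds a `recordId` and a `modelInput` body; for Anthropic Claude models, `modelInput` follows the Messages API request format. The sketch below shows one way the prompt-generation step could emit such lines. The input entry fields (`patient_id`, `report_text`, `audiometric_results`), the prompt wording, the few-shot example format, and the `max_tokens` value are assumptions for illustration.

```python
import json

ANTHROPIC_VERSION = "bedrock-2023-05-31"  # version string used for Claude Messages requests on Bedrock


def build_prompt(entry: dict, inst: dict, examples: list) -> str:
    """Assemble a few-shot prompt from the report, test results, template, and rules."""
    parts = [
        "Classify this pediatric hearing report using ONLY the data provided.",
        "Rules:\n" + "\n".join(inst["rules"]),
        "Output template (JSON):\n" + json.dumps(inst["template"]),
        "Allowed values:\n" + json.dumps(inst["allowed_values"]),
    ]
    # Few-shot examples: previously classified reports with their expected output.
    for i, ex in enumerate(examples, start=1):
        parts.append(f"Example {i} report:\n{ex['report']}\n"
                     f"Example {i} output:\n{json.dumps(ex['output'])}")
    parts.append("Hearing report:\n" + entry["report_text"])
    parts.append("Audiometric results:\n" + json.dumps(entry["audiometric_results"]))
    return "\n\n".join(parts)


def to_batch_lines(entries: list, inst: dict, examples: list, max_tokens: int = 2048) -> list:
    """Return one batch-inference JSONL line per patient entry."""
    lines = []
    for entry in entries:
        record = {
            "recordId": str(entry["patient_id"]),
            "modelInput": {
                "anthropic_version": ANTHROPIC_VERSION,
                "max_tokens": max_tokens,
                "messages": [{
                    "role": "user",
                    "content": [{"type": "text", "text": build_prompt(entry, inst, examples)}],
                }],
            },
        }
        lines.append(json.dumps(record))
    return lines


# Hypothetical, de-identified sample data.
inst = {
    "rules": ["Rule 1: Reason only with the data explicitly provided."],
    "template": {"left_ear_type": "", "right_ear_type": "", "explanation": ""},
    "allowed_values": {"left_ear_type": ["Normal", "Sensorineural", "Conductive", "Mixed"]},
}
examples = [{"report": "Placeholder example report.",
             "output": {"left_ear_type": "Normal", "right_ear_type": "Normal",
                        "explanation": "Rule 1 applied."}}]
entries = [{"patient_id": "P001",
            "report_text": "Placeholder narrative report text.",
            "audiometric_results": {"abr_left_dbnhl": 20, "abr_right_dbnhl": 20}}]
lines = to_batch_lines(entries, inst, examples)
jsonl_body = "\n".join(lines) + "\n"  # contents of the file to upload to S3
```

The resulting file would then be uploaded to S3 and referenced by a batch job, for example through the boto3 `bedrock` client’s `create_model_invocation_job` call; that step is omitted here.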

These templates and rules are not hard-coded; instead, they are maintained in a centralized config.json file. This file acts as a logic engine that defines the expected structure of the output (template), the allowed values per field (validation layer), and the rules the large language model (LLM) must follow to classify hearing loss. This design allows the same codebase to serve multiple institutions, each with its own clinical and data needs. Finally, the model is instructed to reason only with the explicitly provided data and to cite the relevant classification guidelines in its output.
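The validation layer could be as simple as comparing each parsed output with the template and the allowed values. The function and field names below are hypothetical, and the project’s real checks may be stricter or structured differently.

```python
def validate_against_config(result: dict, template: dict, allowed_values: dict) -> dict:
    """Return {field: problem} for every field that is missing, unexpected,
    or outside its allowed values. An empty dict means the output passed."""
    problems = {}
    for field in template:
        if field not in result:
            problems[field] = "missing"
    for field in result:
        if field not in template:
            problems[field] = "not in template"
    for field, allowed in allowed_values.items():
        if field in result and result[field] not in allowed:
            problems[field] = f"{result[field]!r} is not an allowed value"
    return problems


# Hypothetical template and allowed values for one institution.
template = {"left_ear_type": "", "right_ear_type": "", "explanation": ""}
ear_types = ["Normal", "Sensorineural", "Conductive", "Mixed"]
allowed = {"left_ear_type": ear_types, "right_ear_type": ear_types}

good = {"left_ear_type": "Sensorineural", "right_ear_type": "Normal",
        "explanation": "Per Rule 1, classified from the provided results."}
bad = {"left_ear_type": "Probably sensorineural", "explanation": "Unclear.",
       "notes": "extra field"}
print(validate_against_config(good, template, allowed))  # {}
print(validate_against_config(bad, template, allowed))
```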

The model outputs are saved to a separate S3 bucket. The system then downloads and processes this output, using a custom-built JSON parser that can repair malformed responses and extract clean, structured JSON objects from each LLM output. These objects are converted into institution-specific CSV files using logic defined in a configuration file.
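The following sketch illustrates one common best-effort strategy for recovering JSON from model text (strip a Markdown code fence, take the outermost braces, retry after removing trailing commas) and then flattening the results into a CSV. It is not the project’s parser. It assumes each batch output line carries a `recordId` and a `modelOutput` whose `content[0].text` holds Claude’s reply, and the `column_map` that renames fields for a given institution is hypothetical.

```python
import csv
import io
import json
import re
from typing import Optional

FENCE_RE = re.compile(r"`{3}(?:json)?\s*(.*?)`{3}", re.DOTALL)  # Markdown code fence
TRAILING_COMMA_RE = re.compile(r",\s*([}\]])")                # e.g. {"a": 1,}


def extract_json(text: str) -> Optional[dict]:
    """Best-effort recovery of a single JSON object from raw model text."""
    fenced = FENCE_RE.search(text)
    if fenced:
        text = fenced.group(1)
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end <= start:
        return None
    candidate = text[start:end + 1]
    # Try the text as-is, then again with trailing commas removed.
    for attempt in (candidate, TRAILING_COMMA_RE.sub(r"\1", candidate)):
        try:
            obj = json.loads(attempt)
        except json.JSONDecodeError:
            continue
        return obj if isinstance(obj, dict) else None
    return None


def parse_batch_output(jsonl_text: str):
    """Split batch output into parsed results and record IDs that need human review."""
    parsed, failed = [], []
    for line in jsonl_text.splitlines():
        if not line.strip():
            continue
        record = json.loads(line)
        content = (record.get("modelOutput") or {}).get("content") or []
        text = content[0].get("text", "") if content else ""
        obj = extract_json(text)
        if obj is None:
            failed.append(record.get("recordId"))
        else:
            obj["record_id"] = record.get("recordId")
            parsed.append(obj)
    return parsed, failed


def rows_to_csv(results: list, column_map: dict) -> str:
    """Write results as CSV; column_map maps each CSV header to a result field."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(column_map))
    writer.writeheader()
    for res in results:
        row = {}
        for column, field in column_map.items():
            value = res.get(field, "")
            row[column] = "; ".join(map(str, value)) if isinstance(value, list) else value
        writer.writerow(row)
    return buf.getvalue()


fence = "`" * 3
reply = (fence + "json\n"
         + '{"left_ear_type": "Sensorineural", "right_ear_type": "Normal", '
         + '"risk_factors": ["NICU stay longer than 5 days"],}\n'
         + fence)
batch_output = "\n".join([
    json.dumps({"recordId": "P001", "modelOutput": {"content": [{"type": "text", "text": reply}]}}),
    json.dumps({"recordId": "P002", "modelOutput": {"content": [{"type": "text", "text": "No JSON here."}]}}),
])
parsed, failed = parse_batch_output(batch_output)
# Hypothetical institution-specific column mapping.
column_map = {"Patient": "record_id", "Left Ear": "left_ear_type",
              "Right Ear": "right_ear_type", "Risk Factors": "risk_factors"}
csv_text = rows_to_csv(parsed, column_map)
print(failed)  # ['P002']
print(csv_text)
```

The trailing-comma repair is deliberately naive (it would also rewrite a `,}` sequence inside a string value), and records that still fail to parse are returned separately so they can be routed to human review rather than silently dropped.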

This solution is being piloted with Massachusetts Eye and Ear, Boston Children’s Hospital, and the Massachusetts UNHS Program. It is available to other organizations interested in adopting it.

Supporting Documents

| Document | Description |
| --- | --- |
| Source Code | All of the code and assets developed during the course of creating the prototype. |

About the DxHub

The Cal Poly Digital Transformation Hub (DxHub) is a strategic relationship with Amazon Web Services (AWS) and is the world’s first cloud innovation center supported by AWS on a university campus. The primary goal of the DxHub is to provide students with real-world problem-solving experiences by immersing them in the application of proven innovation methods in combination with the latest technologies to solve important challenges in the public sector. The challenges being addressed cover a wide variety of topics, including homelessness, evidence-based policing, digital literacy, virtual cybersecurity laboratories, and many others. The DxHub leverages the deep subject matter expertise of government, education, and non-profit organizations to clearly understand the customers affected by public sector challenges and develop solutions that meet their needs.